The landscape of AI image generation has evolved at a breakneck pace. Just a year ago, generating realistic images with legible text, consistent characters, or coherent multi-character scenes was a daily frustration for digital creatives. We spent hours engineering paragraph-long prompts, tweaking negative weights, and relying on external photobashing tools to fix the inevitable warped hands or gibberish signs. Fast forward to early 2026, and the playing field has fundamentally shifted. The release of the highly anticipated Nanobanana 2 (officially powered by the Gemini 3.1 Flash Image architecture) in February 2026 marks a watershed moment in digital content creation. This isn't just an evolutionary step; it is a leap forward in generation speed, cost-effectiveness, and practical real-world applicability that leaves older heavyweights scrambling to keep up.

This comprehensive 2026 review will dissect exactly why Nanobanana 2 has rapidly claimed the top spot on industry leaderboards—including the grueling Artificial Analysis text-to-image benchmark—and why it might genuinely be the only visual creation tool your marketing team, game studio, or design agency needs this year.

At its core, Nanobanana 2 offers a centralized, incredibly fast, cloud-native art studio. It deliberately integrates multiple cutting-edge video and image models beneath the hood, providing users with a highly convenient, seamless, one-stop AI creation experience directly in their browser without the need for expensive local hardware setups.

🚀 The Paradigm Shift: Unpacking the Core Features of Nanobanana 2

The AI community—from casual enthusiasts on X (formerly Twitter) to enterprise-level art directors—has been buzzing about Nanobanana 2 since its surprise launch window. The early anecdotal praise and subsequent rigorous independent benchmarks confirm the hype. Google DeepMind’s prevailing strategy with this specific release clearly pivots from purely experimental artistic exploration to high-volume, reliable, production-oriented industrial output. They have built a machine designed for professionals who need results yesterday.

1. Exceptional Speed and Unmatched Cost-Efficiency

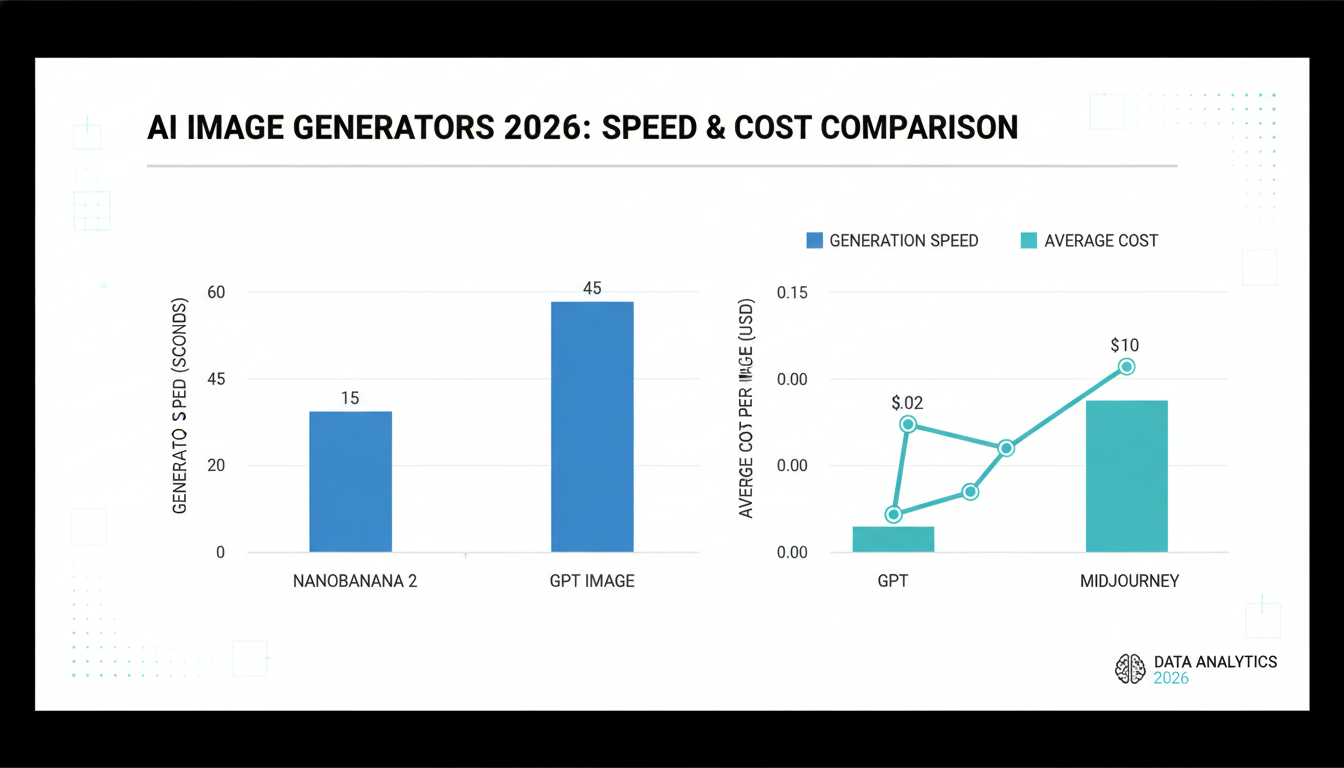

The most immediate, visceral, and striking difference when migrating to Nanobanana 2 from an older generation model (like Midjourney v5 or early DALL-E) is its sheer, unrelenting velocity. By building the infrastructure heavily around the highly optimized Gemini Flash architecture, Nanobanana 2 dramatically outperforms both its predecessors and its current direct competitors in the market.

- Lightning Generation Response: Forget the progress bar. Complex, high-resolution (up to 4K fidelity) images are now routinely generated in approximately 3 to 15 seconds, depending on server load and prompt complexity.

- A Staggering Benchmark Context: To put this speed into perspective, this optimization makes Nanobanana 2 roughly 2 to 3 times faster than its premium sibling, the highly capable Nano Banana Pro model. More impressively, when placed head-to-head with GPT Image 1 (the visual generation component of GPT-4o), Nanobanana 2 is an astonishing 15 to 20 times faster at executing visual rendering tasks.

- Unrivaled Cost-Effective Scaling: For production pipelines, creative agencies, and indie development teams, time directly equates to money. Nanobanana 2 democratizes high-frequency, high-iteration creation with API costs as low as $0.03 per image. Furthermore, its availability in the broader Gemini consumer app makes pro-level artistic quality accessible to casual users and solopreneurs at no additional cost.

When you are rapidly iterating on major campaign concepts, drafting hundreds of panels for marketing storyboards, or just trying to nail down the exact lighting for a social media asset, the complete elimination of the "loading bar wait" entirely changes the rhythm of your creative workflow. You can now generate, tweak the prompt, and regenerate faster than a traditional artist could wash a paintbrush.
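To make the figures above concrete, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers quoted in this review ($0.03 per image, roughly 3 to 15 seconds per generation) and assumes images are generated one after another; the function name `estimate_batch` is hypothetical and not part of any official SDK.

```python
# Figures quoted in this review; real pricing and latency may differ.
PRICE_PER_IMAGE_USD = 0.03
LATENCY_RANGE_S = (3, 15)  # (best case, worst case) seconds per image


def estimate_batch(n_images, price=PRICE_PER_IMAGE_USD, latency=LATENCY_RANGE_S):
    """Estimate cost and wall-clock time for a batch generated sequentially."""
    if n_images < 0:
        raise ValueError("n_images must be non-negative")
    return {
        "cost_usd": round(n_images * price, 2),
        "min_seconds": n_images * latency[0],
        "max_seconds": n_images * latency[1],
    }


if __name__ == "__main__":
    # A 100-panel storyboard: $3.00, between 5 and 25 minutes if run sequentially.
    print(estimate_batch(100))
```

Parallel requests would shorten the wall-clock time but not the cost, so treat the time range as an upper bound for a single sequential session.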

2. Flawless Text Rendering: Conquering the Typography Crisis

For the past three years of the generative AI boom, prompting a model to generate an image featuring specific, readable text often felt like a frustrating game of rolling the dice. You might ask for a "Neon sign that says 'Open 24/7' above a vintage diner," and receive a beautiful image where the sign proudly displays glowing, illegible alien runes resembling "Opeen 24/H."

Nanobanana 2 has decisively and conclusively solved this pervasive typography crisis.

The model demonstrates a massive breakthrough in its ability to consistently render legible, correctly spelled text within densely detailed images. Crucially, it doesn't treat text as a flat overlay or an afterthought; the model appears to understand the physical context of the typography within the three-dimensional scene. Whether your prompt calls for text embossed onto the rough surface of a worn leather biker jacket, spray-painted over a gritty brick wall in an alleyway, or written hastily in smudged chalk on a boutique cafe's A-frame menu board, Nanobanana 2 balances photorealism with precise spelling. It matches the ambient lighting, the shadows, and the camera perspective, so the text belongs in the scene rather than looking pasted on in Photoshop later.

3. Unprecedented Subject, Object, and Character Consistency

Sequential visual storytelling—whether that involves producing fully-fledged comic books, drafting comprehensive video game UI flow storyboards, or orchestrating multi-channel narrative marketing campaigns—absolutely requires a rock-solid foundation of visual consistency.

With almost every previous generation of open-source and proprietary models, maintaining a character’s exact facial structure, clothing, and defining features across different scenes was an exhausting chore. It required complex technical workarounds, meticulous tracking of random seeds, highly specific masking techniques, or heavy reliance on third-party plugins and LoRAs (Low-Rank Adaptations) just to keep the protagonist looking roughly the same from panel one to panel two.

Nanobanana 2 handles this monumental challenge natively, elegantly, and exceptionally well right out of the box.

Community benchmarks and stress tests in Q1 2026 indicate that the model can maintain the visual integrity of up to five distinct characters (human or non-human) at once, along with up to 14 specific, detailed objects, across multiple image generations within a single narrative workflow session.

This is a game-changer for narrative creation. It allows a solo creator or a small design team to introduce a single character, firmly establish their design (down to the shape of their earrings and the exact cut of their jacket), and then place that same character into a bustling cyberpunk city market one moment and a quiet, sunlit Parisian cafe the next, without losing any of their defining visual features or resorting to external software.
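One practical way to work within the limits quoted above (five characters, 14 objects) is to keep a fixed "character sheet" and reuse its wording verbatim in every scene prompt. The Python sketch below is purely illustrative: `Character` and `scene_prompt` are hypothetical helpers, and the prompt layout is an assumption rather than a format the model requires.

```python
from dataclasses import dataclass

# Limits quoted in this review; treat them as assumptions, not hard API limits.
MAX_CHARACTERS = 5
MAX_OBJECTS = 14


@dataclass(frozen=True)
class Character:
    name: str
    description: str  # reused word for word in every scene


def scene_prompt(scene, cast, props=()):
    """Combine a scene with a fixed cast description into one prompt string."""
    if len(cast) > MAX_CHARACTERS:
        raise ValueError(f"at most {MAX_CHARACTERS} characters per session")
    if len(props) > MAX_OBJECTS:
        raise ValueError(f"at most {MAX_OBJECTS} tracked objects per session")
    cast_text = "; ".join(f"{c.name}: {c.description}" for c in cast)
    parts = [scene, f"Characters: {cast_text}"]
    if props:
        parts.append("Objects: " + ", ".join(props))
    return ". ".join(parts)


if __name__ == "__main__":
    mara = Character("Mara", "silver hoop earrings, cropped red jacket")
    print(scene_prompt("A bustling cyberpunk city market", [mara]))
    print(scene_prompt("A quiet, sunlit Parisian cafe", [mara], props=["brass compass"]))
```

Keeping the description in one place means a design change (say, a different jacket) propagates to every later scene automatically.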

4. Real-Time Web Grounding and Contextual Awareness Integration

While speed and typography steal the headlines, perhaps the most profoundly underrated and forward-thinking feature of Nanobanana 2 is its deep backend integration with Google’s immense, real-time web search knowledge graph.

Unlike traditional, standalone offline models that are strictly and permanently limited to the rigid data they were trained on (the dreaded "knowledge cutoff date"), Nanobanana 2 possesses the unique ability to dynamically "reach out" into the live internet. It can actively incorporate unfolding current events, the absolute latest viral fashion trends, newly emerging contemporary architectural styles, or even extremely recent consumer electronics product announcements directly into its generations.

This dynamic "grounding" mechanism drastically improves the factual accuracy, cultural relevance, and immediate usefulness of the visual output. If you ask the model to generate a concept image related to an event that happened just three days ago, it doesn't guess or hallucinate; it uses the web to understand the context. This makes Nanobanana 2 an invaluable tool for fast-paced news organizations, social media trend forecasters, and agile digital marketing teams who operate in environments where being culturally relevant "in the moment" is the entire point.

🏆 The Ultimate 2026 Benchmark: Nanobanana 2 vs. The Industry Competition

It's easy to look at promotional materials in isolation and be impressed. But how does Nanobanana 2 actually stack up against the other established industry heavyweights fighting for dominance in the intensely competitive landscape of early 2026? While individual artistic needs, tastes, and specific pipeline requirements may naturally vary, a very clear consensus has officially emerged among power users, early enterprise adopters, and independent technical reviewers across the board.

The data speaks for itself. Below, we've broken down the key battlegrounds where these models compete.

Table 1: Comprehensive 2026 AI Image Generator Benchmark Comparison

| Core Feature / Key Metric | Nanobanana 2 (Gemini 3.1 Flash Image) | Nano Banana Pro (Heavy Duty) | GPT Image 1 (via GPT-4o) | Midjourney v6 (Artistic Bias) |
|---|---|---|---|---|
| Average Generation Speed | ⚡ 3 - 15 Seconds (Industry Fastest) | 10 - 30 Seconds | 45+ Seconds (Noticeably slower) | 30 - 60 Seconds (Depends on server/upscale) |
| Real-Time World Knowledge | Extremely High (Live Web Grounded natively) | High (Grounded but slower to retrieve) | Moderate (Relies on chat interface search) | None (Locked to training data cutoff) |
| Overall Stylistic Flexibility | Extremely High (Adapts easily to any prompt) | High (Heavy focus on absolute realism) | Moderate (Tends towards specific 'AI' aesthetics) | High (Strong bias towards fine art/cinematic) |
| Complex Text & Typography | ⭐⭐⭐⭐⭐ Excellent (Flawless Integration) | ⭐⭐⭐⭐ Very Good (Minor errors) | ⭐⭐⭐ Moderate to Good | ⭐⭐⭐ Good (Requires specific prompting) |
| Inherent Character Consistency | Native Support (Maintains up to 5 characters) | Requires complex prompting structures | Weak (Struggles with scene-to-scene consistency) | Relies heavily on external /cref Discord tags |
| Optimal / Best Use Case Scenario | Rapid Production, Agile Marketing, Fast Storyboarding | Highly Complex, High-Fidelity Masterpiece Renders | General Assistant Tasks, Casual Diagramming | Fine Art, Highly Stylized Thematic Concepts |
| Estimated Base Cost Structure | $0.03 / image (Incredibly Cost-Effective/Scalable) | Premium Tier Pricing | Premium Subscription Tier | Closed Subscription Based Only |

The Definitive Verdict:

While the heavyweight Nano Banana Pro model may still technically hold a razor-thin, slight edge for absolute maximum photorealistic fidelity when rendering incredibly complex, hyper-detailed macro scenes (like the intricate pores on a face or the complex reflections of a multi-faceted diamond), Nanobanana 2 is, without question, the vastly superior day-to-day creative workhorse.

It comfortably outperforms GPT Image 1 (the image component of GPT-4o) in both generation speed and typographic accuracy. Compared directly with the artistic darling Midjourney v6, Nanobanana 2 is drastically faster, especially when rendering at 4K resolution, and integrates environmental text far more effectively without forcing the user to learn arcane Discord commands. For most professional use cases, Nanobanana 2 is simply the better, more efficient tool.

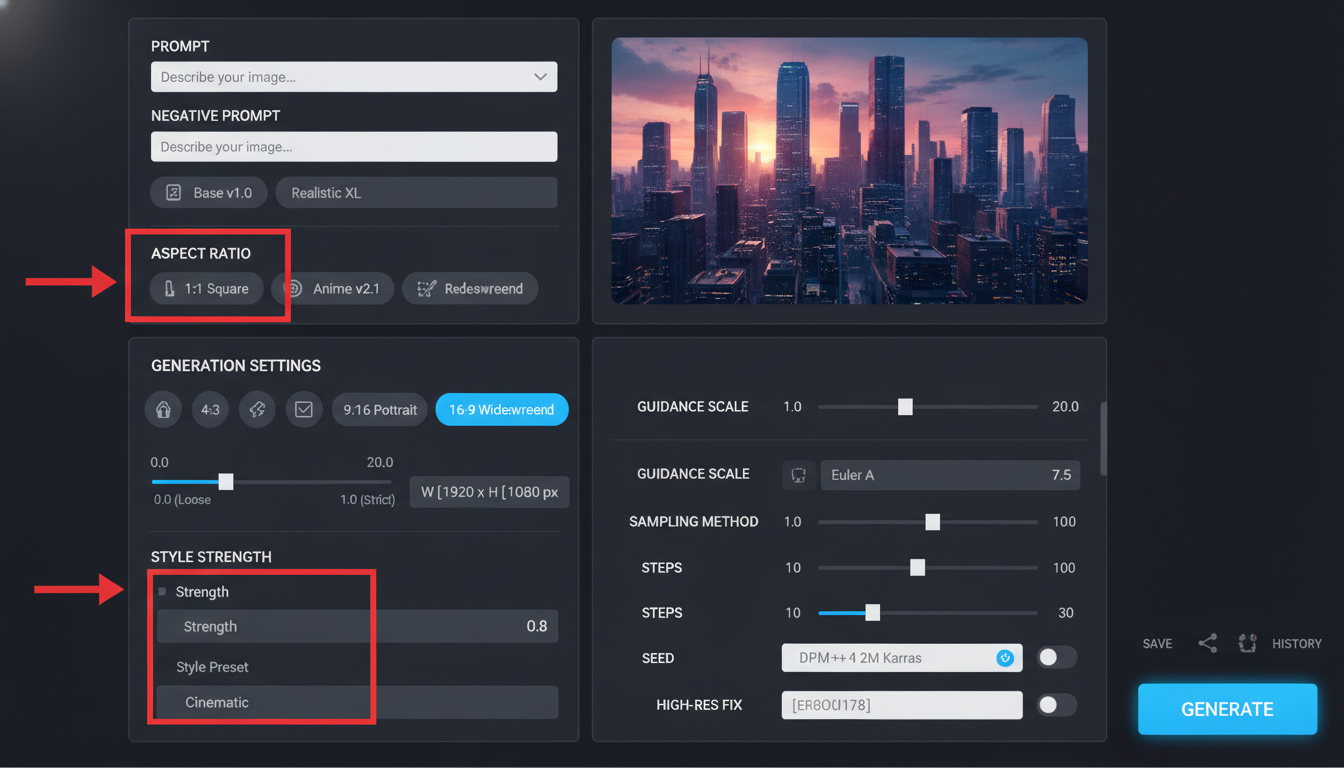

⚙️ Deep Dive: Mastering Optimal Parameter Configurations for Nanobanana 2

Having a powerful engine is one thing; knowing how to tune it for the track is another. To extract the absolute best, most breathtaking performance from the platform, understanding precisely how to configure its myriad of settings for specific artistic outputs is absolutely crucial. Because Nanobanana 2 ingeniously integrates multiple complex models in its backend, knowing how to properly steer it yields professional, publish-ready results on the first attempt, saving both time and API credits.

Below is an exclusive guide designed to provide maximum information gain, helping you bypass the learning curve and jump straight into professional production.

Table 2: The Expert's Guide to Recommended Parameter Configurations by Use Case

| Target Output Use Case / Specific Industry | Recommended Aspect Ratio (AR) | Suggested Prompt Detailing Level | Core Style Alignment Focus | Essential Key Modifier Suggestions (Include in Prompt) |
|---|---|---|---|---|
| E-commerce & Dynamic Product Renders | 1:1 (Instagram) or 4:5 (Pinterest/Stories) | Very High (Strictly specify lighting direction, material texture, and background) | Studio Product Photography, 3D Commercial Render | "Softbox lighting," "Macro photography lens," "Clean white seamless background," "Octane Render," "Subsurface scattering," "High gloss finish." |
| Social Media Banners (X, LinkedIn Headers) | 3:1 (Wide) or 8:1 (Extreme Ultra-Wide) | Moderate (Prioritize clean layout, negative space for text, and clear focal points) | Modern Graphic Design, Vibrant Editorial | "Vast negative space on the right side for typography overlay," "Vector flat illustration," "High contrast corporate minimalism," "Brand colors." |
| Sequential Comic Books & Storyboarding | 2:3 (Traditional Page) or 16:9 (Cinematic) | High (Specify character traits meticulously, control camera angle and lighting explicitly) | Cinematic Noir, Line Art, Japanese Cel Shaded | "Consistent character [Name]," "Dynamic low-angle shot," "Graphic novel style," "Heavy ink wash," "Chiaroscuro lighting," "Speed lines." |
| Web Design & Interactive Hero Sections | 16:9 (Desktop) or 21:9 (Ultrawide Monitor) | Moderate (Focus heavily on overall mood, UX/UI structure, and coherent color palettes) | Modern Tech Minimalist, Glassmorphism, B2B SaaS | "UI/UX desktop mockup layout," "Glassmorphism elements," "Abstract fluid gradient background," "Corporate sleek," "Clean sans-serif typography integration." |
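The configurations in Table 2 can be captured as reusable presets so that every request for a given use case starts from the same aspect ratio and modifiers. The Python sketch below is one possible way to do that; `Preset`, `PRESETS`, and `build_request` are hypothetical names, the dictionary it returns is not a real API payload, and only a subset of each row's modifiers is included for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    aspect_ratio: str
    modifiers: tuple


# A subset of Table 2, using the first recommended aspect ratio of each row.
PRESETS = {
    "ecommerce": Preset("1:1", ("Softbox lighting", "Clean white seamless background")),
    "banner": Preset("3:1", ("Vector flat illustration", "High contrast corporate minimalism")),
    "comic": Preset("2:3", ("Graphic novel style", "Chiaroscuro lighting")),
    "hero": Preset("16:9", ("Glassmorphism elements", "Abstract fluid gradient background")),
}


def build_request(use_case, subject):
    """Return a prompt and aspect ratio for a use case listed in PRESETS."""
    preset = PRESETS[use_case]  # raises KeyError for unknown use cases
    prompt = ", ".join((subject,) + preset.modifiers)
    return {"prompt": prompt, "aspect_ratio": preset.aspect_ratio}


if __name__ == "__main__":
    print(build_request("comic", "a detective in the rain"))
```

How the resulting prompt and aspect ratio are passed to the model depends on the interface you use, which this review does not specify.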

🌍 Real-World Applications: How Major Industries are Rapidly Adapting in 2026

The theoretical capabilities and stark benchmark numbers of Nanobanana 2 are undoubtedly impressive on paper, but its true, undeniable value lies in exactly how it is actively disrupting and fundamentally rewiring traditional creative workflows across various major sectors right now, in real-time.

1. Revolutionizing Marketing and High-Paced Advertising Agencies

Modern advertising agencies are operating under a constant, crushing pressure to deliver a massive volume of highly targeted assets in increasingly shrinking timeframes. With the integration of Nanobanana 2 into their pipelines, the traditional A/B testing phase has not just been optimized; it has been completely transformed.

Instead of waiting agonizing days for a senior designer to manually mock up three different conceptual ad directions, a marketing coordinator can now independently generate thirty distinct variations—complete with the brand's exact slogan flawlessly rendered onto the product packaging or a billboard within the scene—in under five minutes flat. Furthermore, because Nanobanana 2 deeply integrates real-time web knowledge, ongoing campaigns can instantly pivot. If a specific aesthetic or a new meme goes viral on TikTok on a Tuesday morning, the brand can have a perfectly rendered, highly relevant visual asset responding to it published by Tuesday afternoon. This level of agility was previously impossible.
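Generating thirty variations is mostly a matter of combining a few creative dimensions systematically. As a rough illustration, the Python sketch below builds prompt variants from lists of placements, settings, and lighting moods; the function `ad_variants`, the prompt wording, and the sample brand slogan are all hypothetical.

```python
from itertools import product


def ad_variants(slogan, placements, settings, moods):
    """Build one prompt per (placement, setting, mood) combination."""
    return [
        f'{setting}, {mood} lighting, {placement} that reads "{slogan}"'
        for placement, setting, mood in product(placements, settings, moods)
    ]


if __name__ == "__main__":
    prompts = ad_variants(
        slogan="Fresh Every Morning",
        placements=["a billboard", "product packaging"],
        settings=["city street", "kitchen counter", "beach", "train station", "rooftop"],
        moods=["golden hour", "overcast", "neon"],
    )
    print(len(prompts))  # 2 placements x 5 settings x 3 moods = 30 prompts
    print(prompts[0])
```

Each prompt can then be submitted separately, which keeps individual variations easy to compare in an A/B test.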

2. Empowering Indie Game Developers and UI/UX Designers

Producing high-quality concept art, environmental backgrounds, and thousands of UI assets has historically been a massive, budget-draining bottleneck in game development. Nanobanana 2 allows small, scrappy indie development teams to punch significantly above their weight class, competing visually with AAA studios.

By strategically locking in a specific aspect ratio and feeding the model a highly consistent style prompt (for example, "isometric 16-bit pixel art" or "gritty, rusty cyberpunk UI elements with neon accents"), developers can generate hundreds of highly cohesive functional assets—ranging from tiny inventory icons to massive, scrolling background parallax textures—in a single streamlined afternoon session. Additionally, the brand-new robust 3D imaging capabilities introduced in this specific 2.0 version allow for the rapid, dynamic prototyping of character base meshes and complex environment props long before a 3D modeler needs to open Maya or Blender.

3. Liberating Independent Creators, Authors, and Videographers

Independent writers attempting to visualize the vast worlds they are building, or solo YouTube creators desperately needing high-fidelity, high-click-through-rate thumbnails for daily uploads, no longer need to rely on expensive stock photo subscriptions or commission custom, expensive artwork for every single project piece.

By leaning on the model's native multi-character consistency, a fantasy novelist can systematically generate a visual "Lookbook" or "Bible" of their entire cast. They can ensure that the protagonist's scar over the left eye and the intricate design of their armor remain identical, whether the character is standing bravely in a burning forest or quietly commanding a futuristic starship bridge.

🚀 Pushing the Absolute Boundaries: Exploring Extremes and Edge Cases

While Nanobanana 2 obviously excels at standard, everyday tasks like generating stock photos or simple conceptual art, stress-testing its absolute limits reveals the true depth of its underlying neural architecture. When pushed to the edge, the model doesn't break; it adapts.

Mastering Extreme and Unusual Aspect Ratios: Older AI image generators often fall apart when pushed beyond the safe confines of 16:9 or a 1:1 square. If you asked an older model for a panoramic shot, it tended to hallucinate duplicated bodies or elongated cars, or to stretch textures beyond recognition to fill the space. Nanobanana 2, however, handles demanding aspect ratios like 8:1 (well suited to immersive wide website headers or wrap-around VR textures) or 1:8 (ideal for tall, scrolling Pinterest infographics or mobile-first landing pages) with remarkable spatial awareness. It doesn't stretch the image; it composes the scene logically within the geometric constraints provided.
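To see what these ratios mean in pixels, the short Python sketch below converts an aspect ratio string into width and height for a given long edge. The 4096-pixel default is an assumption standing in for "4K"; the review does not state the exact output resolutions the model supports, and `dimensions` is a hypothetical helper.

```python
def dimensions(aspect_ratio, long_edge=4096):
    """Return (width, height) in pixels for a 'W:H' ratio and a fixed long edge."""
    w_part, h_part = (int(p) for p in aspect_ratio.split(":"))
    if w_part <= 0 or h_part <= 0:
        raise ValueError("aspect ratio parts must be positive")
    if w_part >= h_part:
        # Landscape or square: the width is the long edge.
        return long_edge, round(long_edge * h_part / w_part)
    # Portrait: the height is the long edge.
    return round(long_edge * w_part / h_part), long_edge


if __name__ == "__main__":
    print(dimensions("8:1"))   # (4096, 512)
    print(dimensions("1:8"))   # (512, 4096)
    print(dimensions("16:9"))  # (4096, 2304)
```

At 8:1 the short edge is only an eighth of the long edge, which is why composition matters so much more than at 16:9.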

Calculating Complex Global Illumination and Physics-Based Reflections: Where older generative models consistently failed to accurately render the complex physical refraction of light bending through a textured glass of water, or the chaotic, scattered neon reflections bouncing off a wet, rain-slicked cobblestone street at night, Nanobanana 2 handles these incredibly complex physics calculations entirely naturally. The result is breathtakingly realistic global illumination, accurate ambient occlusion, and highly convincing bounce lighting that makes the final output look less like an AI generation and far more like a professional photograph captured on an expensive DSLR camera.

🏁 Conclusion: The Undeniable Future of Creation is Already Here

As we step back and analyze the digital landscape in 2026, one thing is glaringly, undeniably evident: the frustrating era of slow, financially expensive, and technically finicky AI image generation is officially over.

Google DeepMind has delivered an absolute masterclass in balancing high-end, mathematically complex capabilities with pure, intuitive usability. By systematically identifying and addressing the most critical creative pain points of the last three years—specifically legibility in text rendering and concrete subject consistency—and by aggressively supercharging the overall generation speed via the new Flash architecture, they have created a tool that feels less like a fun, chaotic experiment and far more like an absolutely essential, mandatory utility for all modern digital work.

For any professional operating in the visual space, Nanobanana 2 offers a robust, stable, cloud-native ecosystem. It elegantly integrates the scattered tools you previously needed—upscalers, face-fixers, text-renderers—into one seamless, rapid interface.

Whether you are a social media manager frantically generating a quick meme for a trending topic, an agency art director building a comprehensive corporate branding package, or a lead designer sketching out the initial concept art for the next blockbuster video game franchise, the technology formally known as Gemini 3.1 Flash Image represents the current, undisputed pinnacle of practical, high-speed, production-ready AI creation.

Your imagination, your workflow, and your output are no longer bottlenecked by local processing power, exorbitant subscription costs, or frustrating technical limitations. The platform is ready. The only remaining question is what you will choose to create next.

Are you ready to experience the fastest, most capable, and most intelligent AI art studio currently available on the web?